Challenges with deep learning in glaucoma

Corresponding address: Leopold Schmetterer, Singapore

Introduction

Imaging is an essential part of glaucoma care. In recent years, optical coherence tomography (OCT) has to a large degree replaced earlier technologies such as fundus photography or scanning laser ophthalmoscopy, owing to its unprecedented resolution, three-dimensional representation of tissue and good reproducibility. Two kinds of features are extracted from images: semantic features defined by human experts (e.g., fiber bundle defects, optic disc hemorrhages, cup/disc ratio at the slit lamp) and agnostic features defined by equations (e.g., ganglion cell complex, nerve fiber layer thickness, minimum rim width). Semantic features may provide good specificity; however, they depend on the grader's level of experience, differ between experts and are often time-consuming to obtain. Agnostic features may have limited specificity, but usually show little inter-observer variability. Different agnostic features can in principle be combined, thereby offering improved diagnostic performance.

Some agnostic features related to the disease process, such as loss of retinal ganglion cells and their axons, are part of standard diagnosis in glaucoma. Other characteristic features, such as optic nerve head morphology or vascular changes, are not considered, although this information is present in OCT images. Machine learning approaches use agnostic features in a black-box manner rather than relying on an explicit mathematical description or model. Importantly, such an approach can also include non-imaging features such as visual field data, intraocular pressure and anatomical factors such as eye length. Glaucoma is a good candidate for machine learning approaches because of the poorly understood pathogenesis1 and the complex structure-function relationship (Malik et al. 2012).2 Moreover, OCT is an imaging modality that acquires tissue characteristics with almost histological resolution.

The concept of deep learning

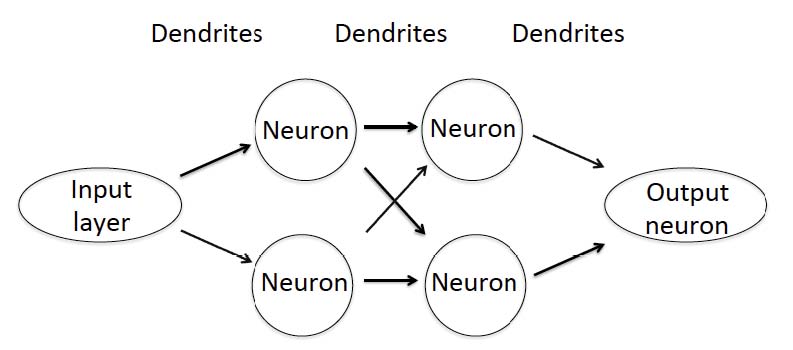

More than 10 million OCT retinal scans are obtained annually, providing an enormous amount of data that cannot be categorized by human graders. Machine-based classification is now the aim of many research groups, and diagnostic performance has improved rapidly. In 2012, a Convolutional Neural Network (CNN) for the first time clearly outperformed conventional computer vision methods in the ImageNet challenge,3 a competition based on a large visual database designed for use in visual object recognition software research. Since then, the performance of new machine learning-based systems has continuously improved. Computer vision, that is, machine-based image analysis, seeks to automate tasks that the human visual system can do, including classification, detection, segmentation, and image enhancement. CNNs are algorithms used to solve computer vision tasks under the umbrella term Artificial Intelligence (AI). Machine learning is a subclass of AI and refers to the ability to learn without being programmed with specific models or equations; in classical machine learning, humans define the imaging features and statistical analysis is used for classification. Deep learning (DL), on the other hand, learns by itself which features are most suitable for classifying the data. The basis of most deep learning approaches is the Artificial Neural Network (ANN), which mimics biological neural networks with their neurons, dendrites and axons. The first layer of an ANN is the input layer, where the image data are entered (Fig. 1). Each connection, the counterpart of a dendrite, is assigned a random number (its weight) at initialization, and each input is multiplied by this weight. The results are then summed for each neuron and passed to the next layer until the final result is obtained in the output neuron. During training, the weights are adjusted step by step so that the output matches the known classification of the training images. Increasing the number of layers usually improves the performance of the ANN, but also increases the amount of input data needed.

Fig. 1. Schematic illustration of a simple artificial neural network (ANN) with two layers. Although the ANNs used for AI-based solutions in image analysis are more complex and use more layers, the working principle remains the same.
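The working principle described above (random weights at initialization, multiplication of each input by its weight, summation per neuron, and passing the result to the next layer) can be illustrated with a minimal sketch. It is not the architecture of any published glaucoma network. The layer sizes, the input values and the helper names `init_layer` and `forward` are hypothetical, and the sigmoid function applied after each sum is an added assumption that the text does not mention.

```python
import math
import random


def init_layer(n_inputs, n_neurons, rng):
    """Assign one random weight to every connection of a layer."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(n_inputs)]
            for _ in range(n_neurons)]


def sigmoid(x):
    """Squash a weighted sum into the range (0, 1). Assumed, not from the text."""
    return 1.0 / (1.0 + math.exp(-x))


def forward(inputs, layers):
    """Multiply inputs by weights, sum per neuron, pass on to the next layer."""
    activations = list(inputs)
    for weights in layers:
        activations = [
            sigmoid(sum(w * a for w, a in zip(neuron_weights, activations)))
            for neuron_weights in weights
        ]
    return activations


rng = random.Random(0)  # fixed seed so the sketch is reproducible
# Hypothetical toy network: 4 input values -> 3 hidden neurons -> 1 output neuron
layers = [init_layer(4, 3, rng), init_layer(3, 1, rng)]
pixels = [0.2, 0.8, 0.5, 0.1]  # stand-in for image data at the input layer
output = forward(pixels, layers)
print(output)
```

With untrained random weights the output carries no diagnostic meaning; the sketch only shows how a value reaches the output neuron.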

Problems of deep learning in glaucoma

The number of images required for DL approaches depends strongly on the complexity of the network and its degrees of freedom. For optimal performance, at least 100,000 images are generally considered necessary as input for training; this collection is called the training dataset. The training dataset includes all stages of the disease as well as healthy control subjects. The outcome of the network depends on the quality of the images and on careful phenotyping. It is desirable to achieve a balance between the different stages of the disease; a lack of late-stage patients is a common problem in training datasets. If the network over-fits the training data, there is a risk that performance in the validation dataset is not as good as in the training dataset. The degree to which networks trained with data from one ethnicity can also be used in populations of other ethnicities is largely unknown. It is also unknown whether it matters if the training dataset contains OCT images obtained from only one specific machine.
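The balance between disease stages mentioned above can be made concrete with a short sketch. It shows two common, generic techniques that the text does not prescribe: a stratified split that keeps the share of each stage equal in the training and validation datasets, and inverse-frequency class weights that let under-represented stages count more during training. The label names, the counts and the function names are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(labels, validation_fraction, seed=0):
    """Split sample indices so each class keeps the same share in both sets."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    rng = random.Random(seed)
    train, validation = [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        n_val = round(len(indices) * validation_fraction)
        validation.extend(indices[:n_val])
        train.extend(indices[n_val:])
    return train, validation


def inverse_frequency_weights(labels):
    """Weight each class by total / (n_classes * count)."""
    counts = defaultdict(int)
    for label in labels:
        counts[label] += 1
    total = len(labels)
    return {label: total / (len(counts) * count)
            for label, count in counts.items()}


# Hypothetical label distribution with few late-stage eyes
labels = (["healthy"] * 600 + ["early"] * 250
          + ["moderate"] * 100 + ["late"] * 50)
train_idx, val_idx = stratified_split(labels, validation_fraction=0.2)
weights = inverse_frequency_weights(labels)
print(len(train_idx), len(val_idx), weights)
```

A clearly higher accuracy on `train_idx` than on `val_idx` after training would be the signature of the over-fitting described above.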

Until now, AI-based approaches in medical care have been narrow, each focused on one specific task. In performing this task, the network may be superior to humans, for instance in estimating the risk of having glaucoma based on fundus photographs, just as Goldmann tonometry is more accurate than palpation in measuring intraocular pressure. Performance must therefore not be confused with competence. The many factors that the physician assesses during a patient examination, including the general appearance of the subject, the presence of concomitant disease and an estimate of the patient’s adherence to medication, are not within the scope of the ANN. Also, when novel imaging modalities become available that provide better resolution, molecular contrast or functional tissue properties, previously trained networks become useless. Again, the network provides performance for one specific task in classifying images, but not competence in ocular imaging.

Implementing AI-based solutions in glaucoma care faces several practical hurdles. The current system of reimbursement does not include machine-based image classification. Hence, there will be a continuous redistribution of money within the health care system when machine learning solutions are implemented. Related to this issue is the question of who will assume liability in case of incorrect classification. If not all images are ultimately re-evaluated by human graders, it seems reasonable that the maker of the network will be responsible. This risk will be reflected in the retail price of the AI product. Economic considerations may also lead to different solutions depending on local insurance systems and the clinical needs in each country.

The cost issue is closely related to the application of AI-based systems. As mentioned above, such applications need to follow a well-defined task. Such tasks may include segmentation of OCT images,4 de-noising of OCT images,5 glaucoma diagnosis or progression analysis.6 In terms of diagnosis, the major problem is that OCT provides good performance for late-stage disease, but not for early-stage disease.7 Although AI-based networks might improve on classical approaches such as retinal nerve fiber layer thickness or ganglion cell mapping, the large number of false positives remains a problem. A potential solution may be to use AI systems that provide high sensitivity, followed by human over-reading to increase specificity.
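The effect of a sensitive AI stage followed by human over-reading can be illustrated with simple arithmetic. In the sketch below all numbers are hypothetical and chosen only for illustration: the prevalence, the sensitivity and specificity of the AI stage, and the share of true and false positives that human graders confirm or reject. The two stages are also assumed to err independently.

```python
def screen(population, prevalence, sensitivity, specificity):
    """Return (true_positives, false_positives) for one screening stage."""
    diseased = population * prevalence
    healthy = population - diseased
    true_positives = diseased * sensitivity
    false_positives = healthy * (1.0 - specificity)
    return true_positives, false_positives


def positive_predictive_value(true_positives, false_positives):
    """Share of positive calls that are truly diseased."""
    return true_positives / (true_positives + false_positives)


# All values below are hypothetical
population = 10_000
prevalence = 0.03

# Stage 1: AI system tuned for high sensitivity
tp_ai, fp_ai = screen(population, prevalence,
                      sensitivity=0.95, specificity=0.85)
ppv_ai = positive_predictive_value(tp_ai, fp_ai)

# Stage 2: human over-reading of AI-positive images only
grader_confirms_true = 0.95   # fraction of true cases the graders keep
grader_rejects_false = 0.90   # fraction of false alarms the graders remove
tp_final = tp_ai * grader_confirms_true
fp_final = fp_ai * (1.0 - grader_rejects_false)
ppv_final = positive_predictive_value(tp_final, fp_final)

print(round(ppv_ai, 3), round(ppv_final, 3))
```

Under these assumed values, only about 16% of AI-positive cases are truly diseased, whereas after over-reading the share rises to about 65%, at the cost of a small loss in sensitivity.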

Before AI-based systems can be used, approval by regulatory authorities such as the FDA or EMA will be required. The required performance for such solutions in glaucoma still needs to be defined. Whereas using machine learning for segmentation or de-noising of OCT images may be easily accepted, basing diagnosis or progression analysis on AI approaches is more critical. Clearly, clinical outcome studies comparing AI-based treatment decisions with classical approaches will increase general acceptance. Whereas the current use of OCT in glaucoma care is easily comprehensible, AI-based solutions are black-box systems. This is a paradigm change, because the physician will not be able to decide whether a certain categorization is due to changes in retinal nerve fibers, retinal ganglion cells or other posterior pole structures. At the end of the day, however, it will be patient acceptance that decides when and to what degree AI-based solutions are implemented in clinical practice.

Outlook

AI-based classification of glaucoma is still in its very early stages. Preliminary data are promising even when relying only on fundus photographs.8 ANNs will not soon replace physicians in diagnosing glaucoma. AI-based solutions will, however, become part of glaucoma care in the near future and may bring us closer to the goal of cost-effective population-based glaucoma screening. Roy Amara from the Institute for the Future (Palo Alto, CA) stated: ‘We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.’ This appears to be very true for AI in glaucoma and other ophthalmic diseases.

References

- Weinreb RN, Aung T, Medeiros FA. The pathophysiology and treatment of glaucoma: a review. JAMA. 2014;311:1901-1911.

- Malik R, Swanson WH, Garway-Heath DF. ‘Structure-function relationship’ in glaucoma: past thinking and current concepts. Clin Exp Ophthalmol. 2012;40:369-380.

- Krizhevsky A, Sutskever I, Hinton GE. ImageNet classification with deep convolutional neural networks. Proceedings of the 25th International Conference on Neural Information Processing Systems - Volume 1. Lake Tahoe, Nevada, Curran Associates Inc.; 2012:1097-1105.

- Devalla SK, Renukanand PK, Sreedhar BK, et al. DRUNET: a dilated-residual U-Net deep learning network to segment optic nerve head tissues in optical coherence tomography images. Biomed Opt Express. 2018;9:3244-3265.

- Devalla SK, Subramanian G, Pham TH, et al. A Deep Learning Approach to Denoise Optical Coherence Tomography Images of the Optic Nerve Head. arXiv preprint arXiv:1809.10589. 2018.

- Ting DSW, Pasquale LR, Peng L, et al. Artificial Intelligence and Deep Learning in Ophthalmology. Br J Ophthalmol. (In press.)

- Bussel II, Wollstein G, Schuman JS. OCT for glaucoma diagnosis, screening and detection of glaucoma progression. Br J Ophthalmol. 2014;98 Suppl 2:ii15-19.

- Ting DSW, Cheung CY, Lim G, et al. Development and Validation of a Deep Learning System for Diabetic Retinopathy and Related Eye Diseases Using Retinal Images From Multiethnic Populations With Diabetes. JAMA. 2017;318:2211-2223.